Sentiment Analysis

Is the American Public Willing to Change Course with President Trump?

- PURPOSE

- SUMMARY

- CODE

- Part 1. Capture training data and train a sentiment classifier

- IMPORTANT NOTE:

- 1.1 Read in and prepare training data

- 1.2 Gather all words in a bag and form tuples of (tokenized-tweets, sentiment)

- 1.3 Get frequency distribution of the words

- 1.4 Select the top 5000 most common words as features

- 1.5 Remove the 30 most common words again

- 1.6 Prepare the word features

- 1.7 Save the word_features

- 1.8 Prepare featuresets for the complete classifier data set

- 1.9 Split the data set for training and testing

- 1.10 Train classifier

- 1.11 Score classifier

- 1.12 Define a function to estimate the sentiment of a tweet

- 1.13 Hand-test classifier

- 1.14 Save classifier

- Part 2. Capture Twitter data on Trump Syria Strike

- Part 3. Analyze Twitter data on Trump Syria Strike

- Part 4. Statistical proportion test

President Trump recently authorized a missile strike on Syria in response to an alleged chemical weapons attack by the president of Syria on members of his own population on April 4, 2017. The strike set off heated debate about the appropriateness and consequences of this drastic, largely unexpected, and seemingly impulsive act. Trump won 46% of the popular vote in the presidential election. Given how recent the election was, one might argue that the country’s approval of the missile strike should roughly match this share. This investigation tests whether that assumption is valid. My prediction is that the share approving the missile strike will differ from the popular vote share. The question matters to the American public because this military action represents a dramatic change of direction relative to the president’s campaign stance.

The Twitter Streaming API was used to collect 5,000 tweets on April 11, 2017, 4 days after the missile strike. It was important to collect this information as soon as possible after the event. Only tweets that contained all of the words 'trump syria strike' were collected (the word order did not matter). Retweets were also collected, on the assumption that a person who retweets agrees with the originator of the tweet. Sentiment analysis was then applied: features were extracted from the tweets in the form of words that convey positive sentiment (interpreted as approval of the strike) or negative sentiment (interpreted as disapproval). A classifier was trained on a separate labeled data set of 100,000 tweets to associate patterns of words with the appropriate sentiment. After training, the classifier was used to estimate the sentiment of each of the 5,000 collected tweets on the Syria missile strike. The classifier's accuracy was 75%, meaning it classified 75% of the held-out test tweets correctly.

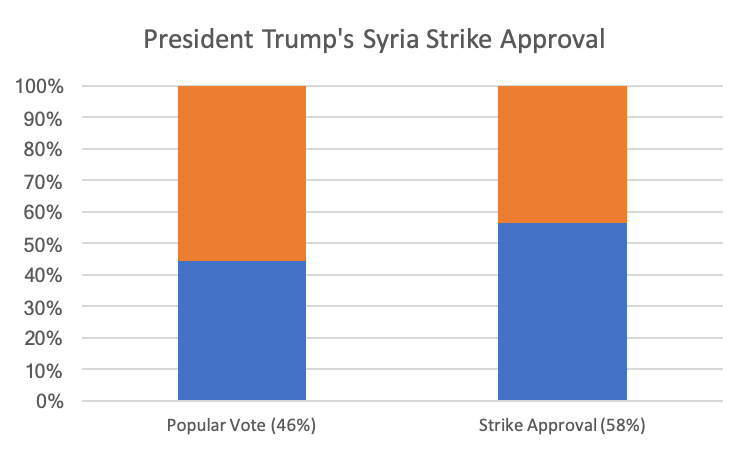

The analysis found that, of the 4,999 usable tweets collected, 2,918 were estimated to have positive sentiment. These were interpreted as conveying approval of the Syria missile strike, which corresponds to a 58% approval rating. Compare this with the 46% share of the popular vote in the presidential election (see the blue columns in Figure 1). A statistical proportion test rejected the assumption that the strike approval rating would align with the popular vote share. This is strong evidence that the American public is willing to support a dramatic change of course by the president relative to his campaign stance on matters of this sort.

A Naive Bayes classifier will be trained on a labeled data set. This data set will also come from a body of tweets to ensure similarity to the style of text associated with tweets (i.e. to-the-point-ness, conciseness, use of exclamation marks, emoticons, etc.). An example of such a labeled data set is http://thinknook.com/twitter-sentiment-analysis-training-corpus-dataset-2012-09-22/. I have selected the first 100,000 rows of this data set for training.

import nltk

import pickle

import pandas as pd

filetext = ''

with open("SentimentAnalysisDataset.csv", encoding='utf-8') as text_file:
    filetext = text_file.read()

# Strip the stray double quotes around 'Brokeback Mountain' in a few rows before parsing the CSV
filetext = filetext.replace('"" Brokeback Mountain ""', 'Brokeback Mountain')
filetext = filetext.replace('" Brokeback Mountain "', 'Brokeback Mountain')
filetext = filetext.replace('" brokeback mountain was terrible.', 'brokeback mountain was terrible.')

with open("SentimentAnalysisDataset_fixed.csv", "w", encoding='utf-8') as out:
    out.write(filetext)

del filetext

# Note that a positive sentiment is indicated by a '1', and a negative sentiment by a '0' in the 'Sentiment' column

df = pd.read_csv('SentimentAnalysisDataset_fixed.csv', nrows=100000)

splitpoint = 80000 #train/test: 80%/20%

df.drop('SentimentSource', axis=1, inplace=True)

df.head()

def word_tokenize(tweet):
    """Breaks a string up into words.

    This function uses the NLTK package's word_tokenize function to split a string up
    into word tokens.

    Parameters
    ----------
    tweet : str
        The text of a tweet.

    Returns
    -------
    list
        A list consisting of the words in the input tweet string.
    """
    return nltk.word_tokenize(tweet)

from tqdm import tqdm #for progress bar

all_words = []

tweet_tuples = []

for index, row in tqdm(df.iterrows(), total=len(df)):
    tweet_words = word_tokenize(row['SentimentText'])
    tweet_words = [word.lower() for word in tweet_words]
    tweet_tuples.append((tweet_words, str(row['Sentiment'])))
    for w in tweet_words:
        all_words.append(w)

print('Number of words: ', len(all_words))

del df

del index

del row

del tweet_words

del w

all_words = nltk.FreqDist(all_words)

# inspect the frequency distribution of the words

print('The 30 most common words are: ', all_words.most_common(30))

all_words.plot(30, cumulative=False)

freq_tuples = all_words.most_common(5000)

freq_tuples = freq_tuples[30:] #remove the 30 most common words

word_features = [w for (w, freq) in freq_tuples]

with open('word_features.pkl', 'wb') as f:
    pickle.dump(word_features, f)

del all_words

del freq_tuples
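Steps 1.3 to 1.6 can be illustrated on a toy corpus. The sketch below uses `collections.Counter` in place of `nltk.FreqDist` (an assumption, made so the example runs without NLTK). The helper name `select_word_features` and the small `top_n` and `skip` values are hypothetical stand-ins for the 5000 and 30 used above.

```python
from collections import Counter

def select_word_features(tokenized_tweets, top_n, skip):
    """Return the top_n most frequent words, minus the `skip` most frequent ones."""
    counts = Counter(w for tweet in tokenized_tweets for w in tweet)
    ranked = [w for w, _ in counts.most_common(top_n)]
    return ranked[skip:]

# Toy corpus with word counts: i=4, love=3, this=2, hate=1
toy_tweets = [
    ["i", "love", "this"],
    ["i", "hate", "this"],
    ["i", "love", "love"],
    ["i"],
]

features = select_word_features(toy_tweets, top_n=3, skip=1)
print(features)  # the most frequent word ("i") is dropped
```

Dropping the most frequent words is a crude substitute for a stop-word list: words that occur in nearly every tweet carry little information about sentiment.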

def sentiment_features(tweet_words):
    """Extract sentiment features from a tweet.

    This function extracts sentiment features from the words of a tweet.

    Parameters
    ----------
    tweet_words : list of words
        The text of a tweet, tokenized into words.

    Returns
    -------
    dict
        A dictionary that has a key for each word in the list of word features (word_features).
        The value of each entry indicates whether that specific word is present in this tweet.
    """
    uniq_words = set(tweet_words) #keep unique words only
    features = {}
    for w in word_features:
        features[w] = (w in uniq_words)
    return features
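To make the shape of a feature set concrete, here is a minimal sketch of the same word-presence encoding on a three-word vocabulary. `presence_features` and `toy_vocabulary` are hypothetical names; in the notebook the vocabulary is the global `word_features` list.

```python
def presence_features(tweet_words, vocabulary):
    """Map each vocabulary word to True/False depending on whether the tweet contains it."""
    uniq_words = set(tweet_words)
    return {w: (w in uniq_words) for w in vocabulary}

toy_vocabulary = ["good", "bad", "news"]  # stands in for word_features
feats = presence_features(["this", "is", "good", "news"], toy_vocabulary)
print(feats)
```

Every tweet is mapped to a dictionary with the same keys, so words outside the vocabulary (here "this" and "is") are ignored.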

from tqdm import tqdm

featuresets = [(sentiment_features(tweet_words), sentiment) for (tweet_words, sentiment) in tqdm(tweet_tuples)]

print('Number of featuresets: ', len(featuresets))

del tweet_tuples

training_set = featuresets[:splitpoint]

testing_set = featuresets[splitpoint:]

print('Length of featuresets: ', len(featuresets))

print('Length of training_set: ', len(training_set))

print('Length of testing_set: ', len(testing_set))

del featuresets

"""commented out to protect

classifier = nltk.NaiveBayesClassifier.train(tqdm(training_set))

"""

"""commented out to protect

accuracy = nltk.classify.accuracy(classifier, testing_set)

print('Accuracy: ', accuracy)

"""

"""commented out to protect

classifier.show_most_informative_features(40)

"""
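For readers who want to see what training and scoring do without running the full 80,000-tweet job, the following is a minimal pure-Python sketch of a Naive Bayes classifier over boolean features. It is not NLTK's implementation: it assumes add-one smoothing and hypothetical helper names (`train_naive_bayes`, `classify`), and the four training examples are made up for illustration.

```python
import math
from collections import defaultdict

def train_naive_bayes(labeled_featuresets):
    """Estimate log P(label) and P(feature is True | label) with add-one smoothing."""
    label_counts = defaultdict(int)
    true_counts = defaultdict(lambda: defaultdict(int))
    feature_names = set()
    for features, label in labeled_featuresets:
        label_counts[label] += 1
        for name, present in features.items():
            feature_names.add(name)
            if present:
                true_counts[label][name] += 1
    total = sum(label_counts.values())
    model = {}
    for label, n in label_counts.items():
        prior = math.log(n / total)
        p_true = {name: (true_counts[label][name] + 1) / (n + 2) for name in feature_names}
        model[label] = (prior, p_true)
    return model

def classify(model, features):
    """Return the label with the highest posterior log-probability."""
    best_label, best_score = None, -math.inf
    for label, (prior, p_true) in model.items():
        score = prior
        for name, p in p_true.items():
            score += math.log(p if features.get(name, False) else 1.0 - p)
        if score > best_score:
            best_label, best_score = label, score
    return best_label

# Made-up data; labels are the strings '1' (positive) and '0' (negative), as above
toy_training_set = [
    ({"good": True, "bad": False}, "1"),
    ({"good": True, "bad": False}, "1"),
    ({"good": False, "bad": True}, "0"),
    ({"good": False, "bad": True}, "0"),
]
toy_testing_set = [
    ({"good": True, "bad": False}, "1"),
    ({"good": False, "bad": True}, "0"),
]

model = train_naive_bayes(toy_training_set)
correct = sum(classify(model, feats) == label for feats, label in toy_testing_set)
accuracy = correct / len(toy_testing_set)
print("Accuracy: ", accuracy)
```

Accuracy here has the same meaning as in step 1.11: the fraction of held-out examples whose predicted label equals the true label.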

def estimate_sentiment(tweet):
    """Estimate the sentiment of a tweet.

    This function estimates the sentiment of a tweet.

    Parameters
    ----------
    tweet : str
        The text of a tweet.

    Returns
    -------
    str
        The label predicted by the classifier (labels were stored as strings in step 1.2):
        '1' for positive, and
        '0' for negative
    """
    return classifier.classify(sentiment_features(word_tokenize(tweet)))

print( estimate_sentiment('I feel fine today!') )

print( estimate_sentiment('I feel horrible today!') )

print( estimate_sentiment('This is good news.') )

print( estimate_sentiment('This was a mistake') )

print( estimate_sentiment('He had to do it') )

print( estimate_sentiment('Not fair to Syria') )

"""commented out to protect

f = open('tweepy_naivebayes.pkl', 'wb')

pickle.dump(classifier, f)

f.close()

"""

import tweepy

import time

from datetime import datetime

file_name = 'trump_syria_strike15.txt'

track_string = 'trump syria strike'

class WritingTxtStreamListener(tweepy.StreamListener):
    """Sets up and captures a stream of tweets.

    This class sets up and captures a stream of tweets from the Twitter Streaming API.
    """

    __count = 0

    #captures the json of a tweet
    def on_data(self, data):
        try:
            with open(file_name, 'a', encoding='utf-8') as f:
                f.write(data)
            self.__count += 1
            print(str(datetime.now().time()) + ': ' + str(self.__count))
            return True
        except BaseException as e:
            print('ERROR: ' + str(e))
            time.sleep(5)

    #disconnect the stream if we receive an error message indicating we are overloading Twitter
    def on_error(self, status_code):
        print('ERROR: status_code = ' + str(status_code))
        if status_code == 420:
            #returning False in on_error disconnects the stream
            return False

con_key = ''

con_secret = ''

acc_token = ''

acc_secret = ''

auth = tweepy.OAuthHandler(consumer_key=con_key, consumer_secret=con_secret)

auth.set_access_token(acc_token, acc_secret)

api = tweepy.API(auth, wait_on_rate_limit=True, wait_on_rate_limit_notify=True, retry_count=10, retry_delay=5, retry_errors=5)

my_stream_listener = WritingTxtStreamListener()

my_stream = tweepy.Stream(auth = api.auth, listener=my_stream_listener)

my_stream.filter(track=[track_string], languages=['en'])

my_stream.disconnect()
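The `track` phrase 'trump syria strike' selects tweets that contain all three words in any order. The sketch below imitates that rule locally so it can be checked on sample text. It is a simplification of Twitter's server-side matching, and `matches_track` is a hypothetical helper, not part of tweepy.

```python
import re

def matches_track(text, phrase):
    """True if every word of `phrase` occurs as a token in `text`, case-insensitively."""
    tokens = set(re.findall(r"\w+", text.lower()))
    return all(word in tokens for word in phrase.lower().split())

print(matches_track("Strike on Syria ordered by Trump", "trump syria strike"))
print(matches_track("Trump comments on Syria", "trump syria strike"))
```

The first call matches because word order is ignored; the second does not because "strike" is missing.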

import json

import pandas as pd

tweets_data = []

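# The stream was written as UTF-8, so read it back with the same encoding
with open('trump_syria_strike15.txt', 'r', encoding='utf-8') as tweets_file:
    for line in tweets_file:
        try:
            tweet_json = json.loads(line)
            tweets_data.append(tweet_json)
        except ValueError:
            # skip blank lines and truncated records that are not valid JSON
            continue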

print( 'Number of tweets: ', len(tweets_data) )

tweets = pd.DataFrame()

tweets['text'] = list(map(lambda x: x['text'], tweets_data))

tweets.head()
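A captured stream file can contain lines that are not tweets, such as blank keep-alive lines, truncated records, or control messages without a `text` field. The sketch below shows one defensive way to extract the text under those assumptions. `parse_tweet_lines` and the sample lines are hypothetical and are not taken from the collected data.

```python
import json

def parse_tweet_lines(lines):
    """Return the 'text' of every line that is valid JSON and has a 'text' field."""
    texts = []
    for line in lines:
        line = line.strip()
        if not line:
            continue  # blank keep-alive line
        try:
            record = json.loads(line)
        except ValueError:
            continue  # truncated or malformed record
        if isinstance(record, dict) and "text" in record:
            texts.append(record["text"])
    return texts

sample_lines = [
    '{"text": "trump syria strike was justified"}',
    '',
    '{"limit": {"track": 12}}',
    '{"text": "broken',
]
texts = parse_tweet_lines(sample_lines)
print(texts)
```

Only the first sample line survives; the other three are skipped instead of raising an error or producing an empty row.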

import re

def suppress_newlines(tweet):
    """Replace every newline in a tweet with a space."""
    res = re.sub(r'\n', ' ', tweet)
    return res

tweets['text'] = tweets['text'].apply(suppress_newlines)

import pickle

f = open('tweepy_naivebayes.pkl', 'rb')

classifier = pickle.load(f)

f.close()

f = open('word_features.pkl', 'rb')

word_features = pickle.load(f)

f.close()

#from tqdm import tqdm, tqdm_pandas

#tqdm_pandas(tqdm())

tweets['est_sentiment'] = ''

#tweets['est_sentiment'] = tweets['text'].progress_apply(estimate_sentiment)

tweets['est_sentiment'] = tweets['text'].apply(estimate_sentiment)

tweets.head()

tweets.to_csv('trump_syria_strike15.csv', encoding='utf-8', sep='|')

## Load sentiment data on Trump Syria Strike

df <- read.csv("trump_syria_strike15.csv", sep = "|", stringsAsFactors = FALSE)

head(df)

names(df)

## Count the tweets with positive sentiment, disregard a handful of rows that have NAs

total.evals <- sum(complete.cases(df))

total.evals

total.approvals <- sum(df[,'est_sentiment'], na.rm = TRUE)

total.approvals

fraction.approvals <- total.approvals/total.evals

fraction.approvals
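For readers following along in Python only, the same count can be sketched with the standard `csv` module. This is an illustrative counterpart, not the analysis that was run: `approval_fraction` is a hypothetical helper, the sample rows are made up, and rows without a valid label are simply skipped, which approximates the NA handling in the R code.

```python
import csv
import io

def approval_fraction(csv_file):
    """Count rows labeled '1' among rows with a valid '0'/'1' label in a '|'-separated file."""
    reader = csv.DictReader(csv_file, delimiter="|")
    labels = [row["est_sentiment"] for row in reader if row["est_sentiment"] in ("0", "1")]
    approvals = sum(int(v) for v in labels)
    return approvals, len(labels), approvals / len(labels)

# Made-up sample in the layout written by tweets.to_csv(..., sep='|')
sample_csv = (
    "|text|est_sentiment\n"
    "0|good call|1\n"
    "1|terrible idea|0\n"
    "2|strong response|1\n"
    "3|row with a missing label|\n"
)

result = approval_fraction(io.StringIO(sample_csv))
print(result)
```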

## Perform a hypothesis test of proportions

### Setup hypotheses

The hypotheses will be:

H0: p = p0

H1: p != p0

where

p is the true proportion of the American public that *approves* the strike, and p0 = 0.46.

The *null* hypothesis, therefore, states that the proportion of the public that *approves* the strike (associated with *positive* sentiment tweets) is the *same* as the proportion of popular votes (46%) during the presidential election.

The *alternative* hypothesis states that the proportion of the public that approves the strike is *different* from the proportion of popular votes. The type of hypothesis test will be a *one-sample test for a proportion*. The test will be *two-sided*.

### Perform hypothesis test

prop.test(total.approvals, n = total.evals, p = 0.46, alternative = 'two.sided')
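The arithmetic behind this test can be sketched in a few lines of Python using the counts reported in the summary (2,918 approvals out of 4,999 tweets). This is a plain normal-approximation z-test, written here as an illustration. R's `prop.test` reports a chi-squared statistic and applies a continuity correction by default, so its numbers will differ slightly. The function name `one_sample_proportion_ztest` is hypothetical.

```python
import math

def one_sample_proportion_ztest(successes, n, p0):
    """Two-sided z-test of H0: p = p0 using the normal approximation."""
    p_hat = successes / n
    se = math.sqrt(p0 * (1.0 - p0) / n)  # standard error under H0
    z = (p_hat - p0) / se
    p_value = math.erfc(abs(z) / math.sqrt(2.0))  # equals 2 * (1 - Phi(|z|))
    return p_hat, z, p_value

p_hat, z, p_value = one_sample_proportion_ztest(2918, 4999, 0.46)
print(f"p_hat = {p_hat:.3f}, z = {z:.2f}, p-value = {p_value:.3g}")
```

With almost 5,000 observations the standard error under H0 is about 0.007, so a gap of 12 percentage points between the observed share and 0.46 lies many standard errors from the null value.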

### Conclusion

The p-value is much smaller than 0.05, so at the 0.05 significance level we *reject* the null hypothesis. There is enough evidence to claim that the proportion of the American public that *approves* the missile strike is *different* from 46%. The estimated proportion that approves the strike is 58%.